Miris public beta is live

Teams can now produce high-fidelity, photorealistic 3D faster than ever. But getting it to users at scale is still the bottleneck.

That gap has forced every team building with 3D into the same tradeoff: sacrifice fidelity for reach, or absorb high delivery costs to preserve quality. We built Miris to eliminate that tradeoff. In February, we announced the Public Beta and explained why adaptive spatial streaming changes the economics of 3D delivery.

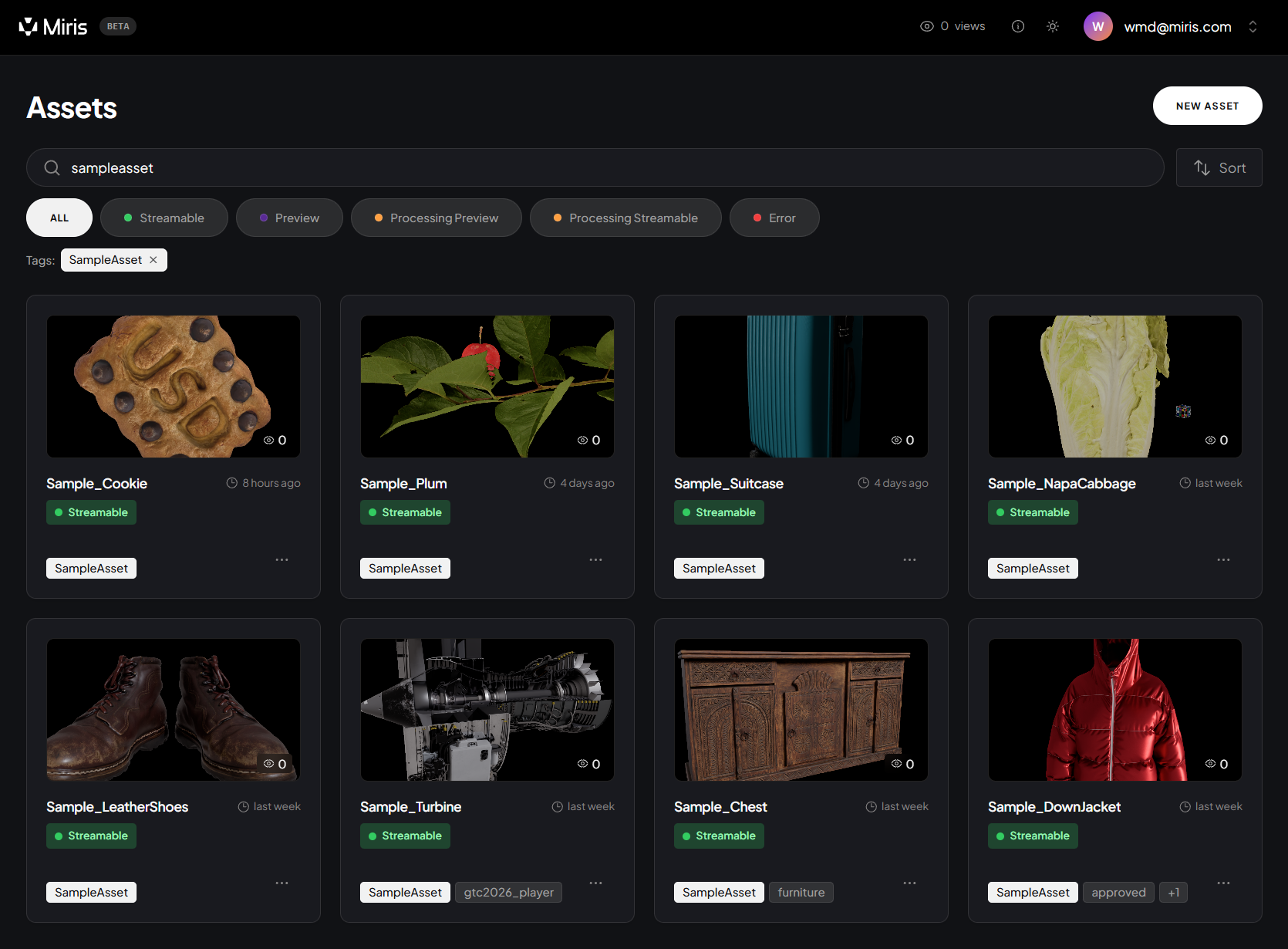

Today, the platform is live. Teams can sign up, upload assets, and start streaming.

This post is about what you can do with Miris right now, and what the beta experience looks like in practice.

When you sign up for the beta, you get immediate access to:

We're not launching into a vacuum. Early partners are already integrating Miris into production workflows.

Playbook, the creative asset management platform, plans to use Miris to provide users with fast, high-fidelity 3D asset review directly in the browser. Instead of downloading multi-gigabyte files to inspect a model, their users will stream and interact with assets at source quality.

Voxel51, the open-source toolkit for visual AI, is integrating Miris for robotics and industrial applications. Their teams work with large-scale 3D datasets captured from real-world driving environments. Miris lets them stream those datasets to any workstation without requiring massive local downloads or dedicated GPU infrastructure for each viewer.

These are the kinds of workflows Miris was built for: teams that already have high-fidelity 3D content and need a practical way to deliver it.

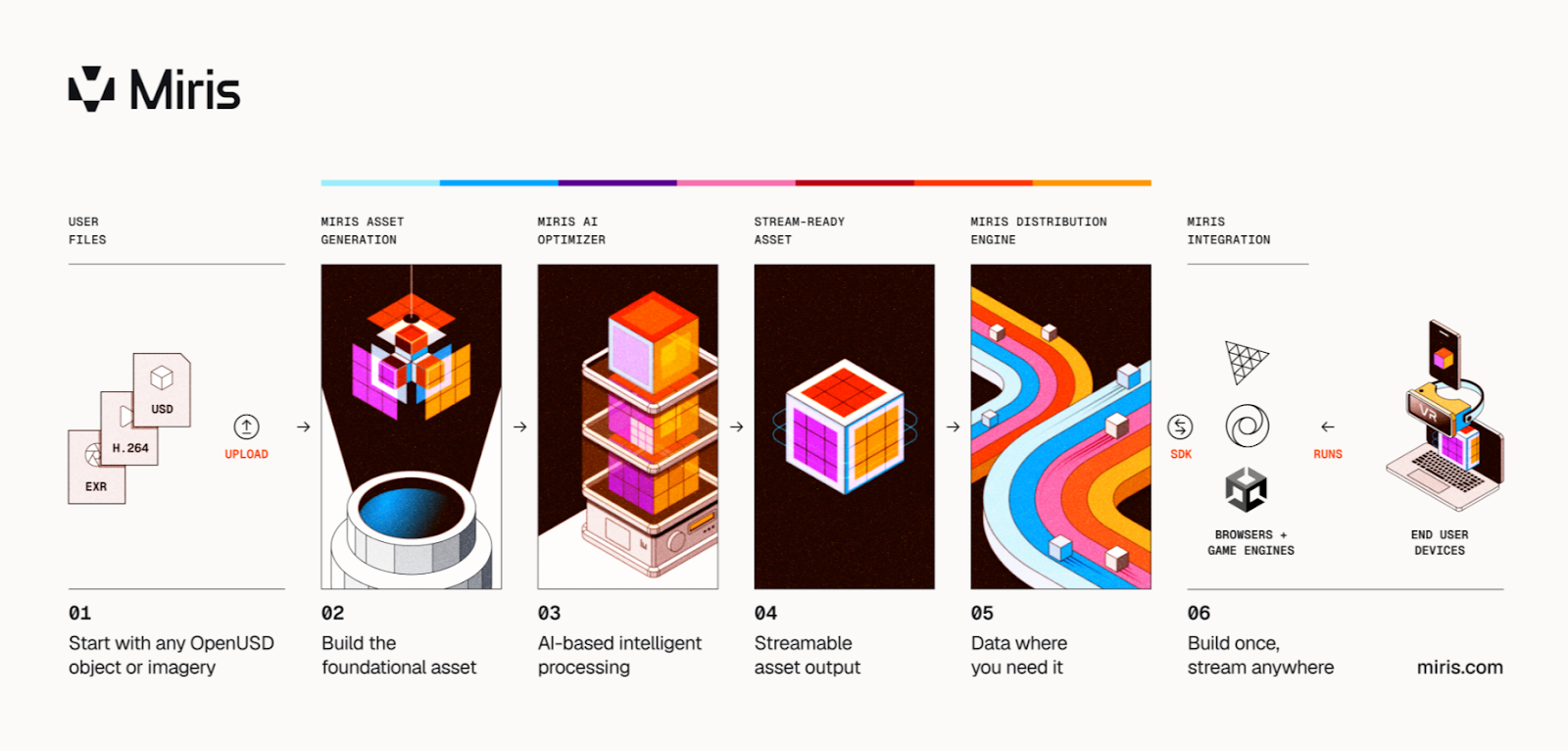

Miris is an adaptive spatial streaming platform. You upload 3D source assets (currently OpenUSD, with support for more formats to follow). At ingest, Miris processes each asset and generates an optimized version for streaming. There is no manual optimization step; Miris handles it.

When a user loads the experience, the scene begins rendering immediately. Only the data required for the current view is delivered first. Additional detail streams adaptively based on network conditions, device capabilities, and viewing behavior. A 1GB source asset loads and becomes interactive as quickly as a simple 5MB glTF.
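The adaptive behavior described above is an instance of the general technique of view-dependent progressive refinement. Miris's own scheduler is not documented in this post, so the Python below is only a hypothetical sketch of the idea. It assumes the scene is split into regions, each available at several levels of detail. Coarse data for every region is requested before any refinement, nearer regions are refined first, a device-dependent `max_lod` caps detail, and each request window is bounded by measured bandwidth. `Chunk`, `plan_batch`, and all parameters are illustrative names, not part of any Miris API.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class Chunk:
    """Hypothetical unit of streamable spatial data (not a Miris type)."""
    region: str       # which part of the scene this chunk covers
    lod: int          # level of detail; 0 is the coarsest
    size_bytes: int
    center: Vec3      # world-space position used for distance ranking


def plan_batch(
    pending: list[Chunk],
    have: set[tuple[str, int]],
    camera: Vec3,
    bandwidth_bps: float,
    window_s: float,
    max_lod: int,
) -> list[Chunk]:
    """Choose which chunks to request in the next window (illustrative only)."""
    budget = int(bandwidth_bps / 8 * window_s)  # bytes that fit in this window
    ready = set(have)                           # (region, lod) pairs already available
    batch: list[Chunk] = []
    # Coarser levels first; within a level, regions nearer the camera first.
    for chunk in sorted(pending, key=lambda c: (c.lod, math.dist(c.center, camera))):
        if chunk.lod > max_lod:
            continue  # the device cannot make use of detail this fine
        if chunk.lod > 0 and (chunk.region, chunk.lod - 1) not in ready:
            continue  # only refine regions that already have a coarser base
        if chunk.size_bytes > budget:
            continue  # does not fit in this window; try again next window
        batch.append(chunk)
        ready.add((chunk.region, chunk.lod))
        budget -= chunk.size_bytes
    return batch


if __name__ == "__main__":
    scene = [
        Chunk("near", 0, 200_000, (0.0, 0.0, 1.0)),
        Chunk("far", 0, 200_000, (0.0, 0.0, 50.0)),
        Chunk("near", 1, 800_000, (0.0, 0.0, 1.0)),
        Chunk("far", 1, 800_000, (0.0, 0.0, 50.0)),
        Chunk("near", 2, 3_000_000, (0.0, 0.0, 1.0)),
    ]
    # 10 Mbps for one second gives a 1,250,000-byte budget.
    batch = plan_batch(scene, set(), (0.0, 0.0, 0.0), 10_000_000, 1.0, max_lod=2)
    print([(c.region, c.lod) for c in batch])
    # prints [('near', 0), ('far', 0), ('near', 1)]
```

In this sketch both regions become visible at coarse quality in the first window, and the remaining budget goes to refining the region closest to the viewer; a low-end device passed `max_lod=0` would receive only the coarse pass. A real system would also account for occlusion, screen-space error, and network variation between windows.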

The key architectural distinction: Miris streams spatial data that is reconstructed on the client device. Nothing is rendered in the cloud at the point of delivery, so there are no cloud GPU dependencies, and delivery costs resemble those of streaming video rather than those of running cloud GPUs.

The result is sub-second time-to-interaction for complex assets, high fidelity without massive downloads, and infrastructure that scales without per-user cloud GPU costs.

Three things have converged to make this the right moment for developers to evaluate Miris.

Sign up for the free Public Beta. Access is limited. We're onboarding developers in waves to ensure quality support, so sign up today to lock in your spot. The beta runs through summer, ahead of general availability later this year.

If you're building web experiences with 3D (product configurators, architectural visualization, digital twins, simulation, interactive retail) and you've been working around the limitations of current delivery methods, this is the moment to try a different approach.

We're building Miris with developer feedback. What you tell us during beta shapes what we ship.